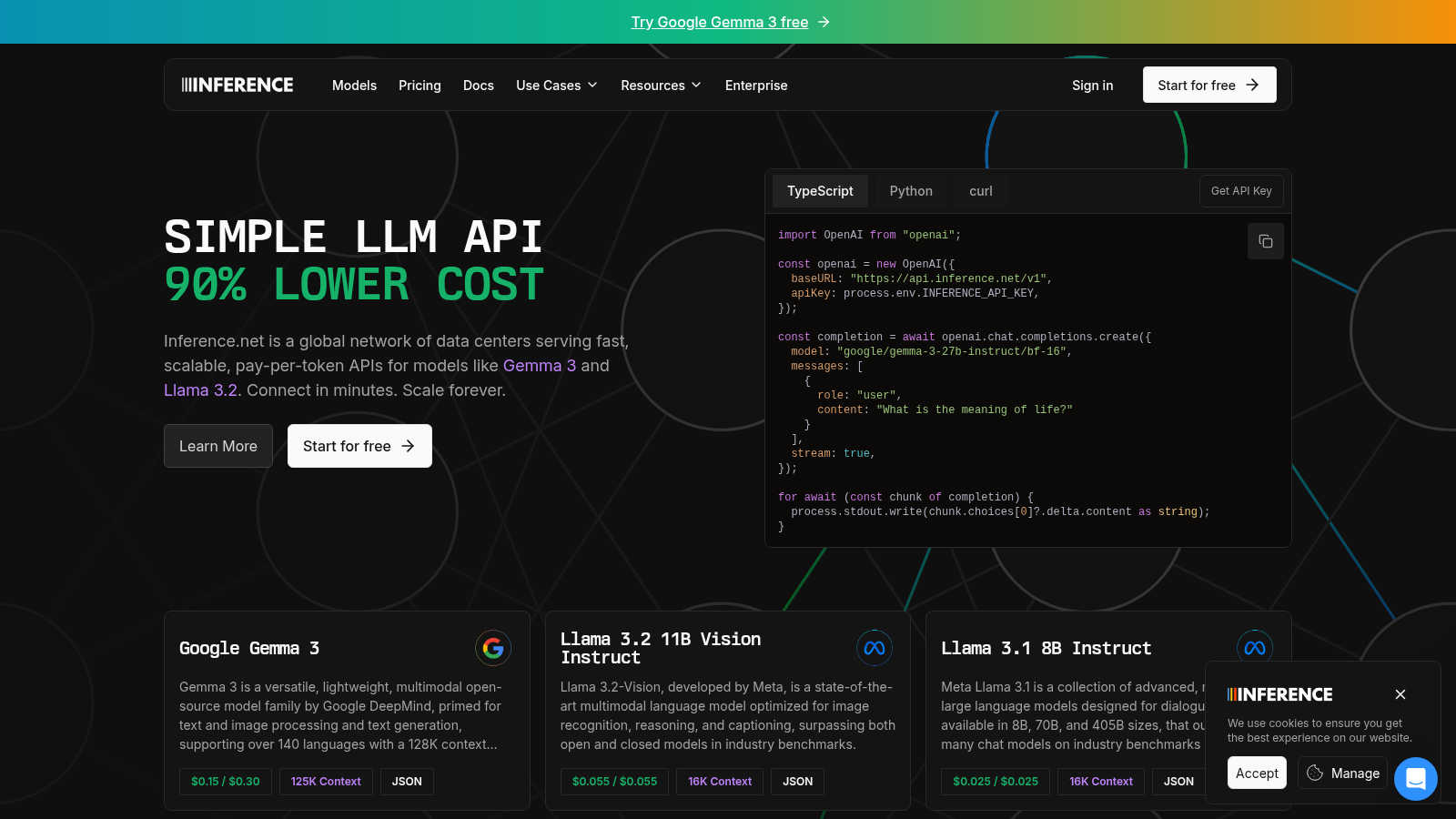

Inference.net

AI inference platform offering cost-effective, powerful API solutions for developers' AI applications.

Published on: March 24, 2025

About Inference.net

Inference.net offers fast, affordable AI inference with premium models like DeepSeek R1 and Llama 3.3. Developers save up to 90% on costs while getting simple APIs, OpenAI-compatible SDKs, and support for real-time chat, batch processing, and data extraction. Setup takes minutes, and capacity scales with project size.
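As a rough illustration of what "OpenAI-compatible" usually means in practice, the sketch below builds a chat completion request in the OpenAI wire format using only the Python standard library. The base URL, model ID, and helper names (`build_chat_request`, `send`) are placeholders chosen for this example, not values taken from the listing; the provider's own documentation should be consulted for the real endpoint and model names.

```python
import json
import urllib.request

# Assumptions for illustration only: neither value comes from the listing.
BASE_URL = "https://api.example.com/v1"  # hypothetical base URL
MODEL = "example-model"                  # hypothetical model ID


def build_chat_request(prompt, api_key, base_url=BASE_URL, model=MODEL):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request):
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(request) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Because the request shape matches the OpenAI chat completions format, switching between compatible providers should in principle only require changing the base URL, API key, and model ID.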

You may also like:

Runway Aleph

Experience the future of video creation with Runway Aleph, a state-of-the-art in-context video model that transforms how you edit, generate, and manip

ThinkSound - AI Video-to-Audio Generator

Transform any video into immersive audio experiences with AI-powered sound generation and editing.

JobMojito

Real-time AI avatar interviews, branded portals, smart scoring, and multilingual support to help you automate and scale hiring.