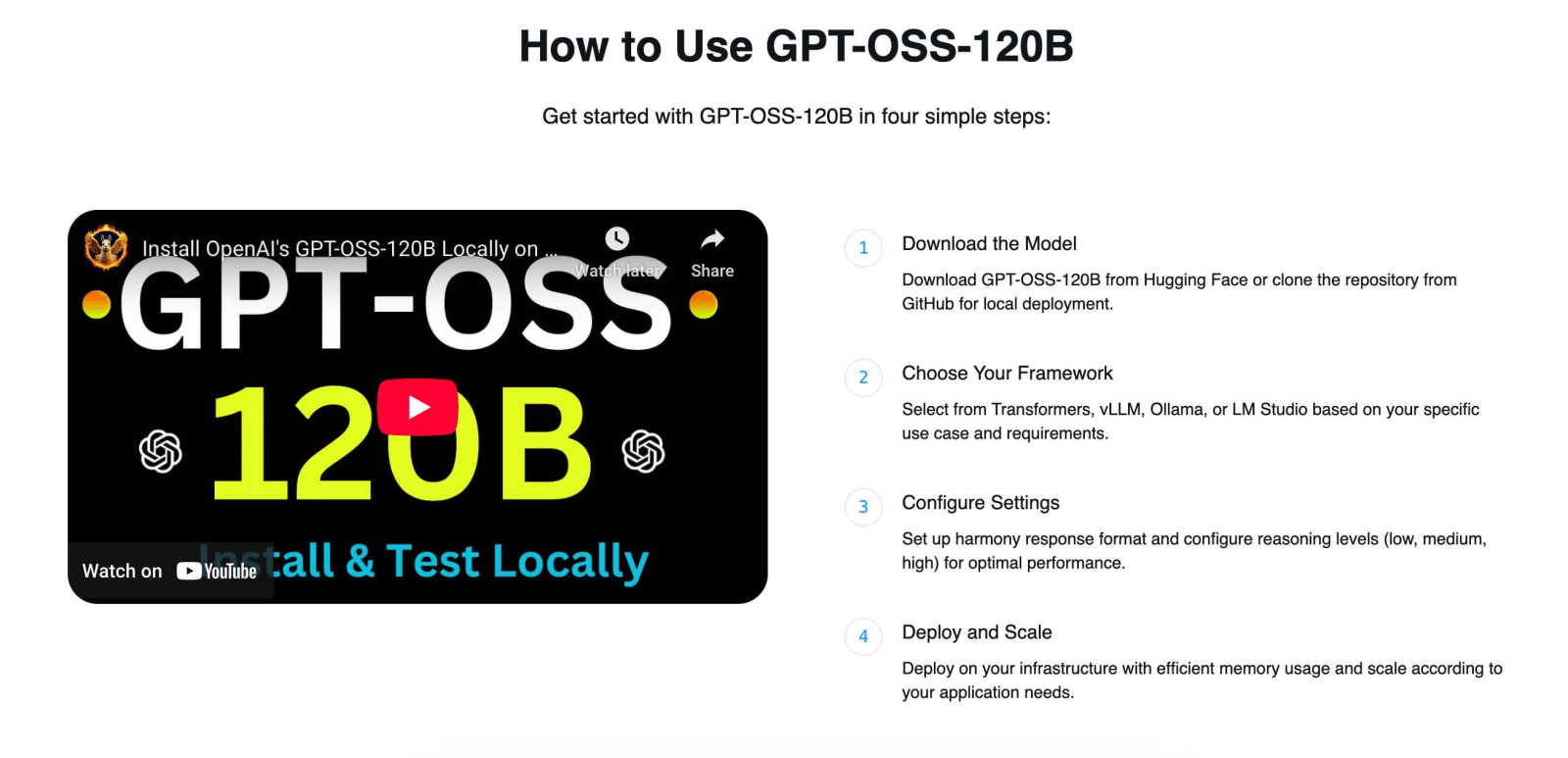

GPT-OSS-120B

GPT-OSS-120B is an open-weight language model from OpenAI for advanced reasoning, licensed for commercial use.

About GPT-OSS-120B

GPT-OSS-120B is an open-weight language model from OpenAI, built to make top-tier AI reasoning widely accessible. It has 117 billion parameters in total, but its Mixture-of-Experts (MoE) architecture activates only about 5.1 billion of them per token. Combined with MXFP4 quantization, this lets the model run on a single high-end 80GB GPU. It is released under the permissive Apache 2.0 license, which allows commercial use, modification, and redistribution. Whether you're a researcher exploring machine reasoning, a developer building AI applications, or a business integrating analysis and problem-solving into your products, GPT-OSS-120B is a versatile toolkit. It combines strong performance in areas like math and health reasoning with operational efficiency and open weights.

Features of GPT-OSS-120B

Mixture-of-Experts (MoE) Architecture

This isn't your typical giant model. GPT-OSS-120B uses a Mixture-of-Experts design with 128 experts in total. For each token it processes, a router selects only the 4 most relevant experts to activate. So while the model has 117 billion parameters in total, it uses only about 5.1 billion per token. The result is a model with the capacity of a heavyweight that runs with the per-token compute cost of a much smaller one.
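The selection step can be sketched in a few lines of plain Python. This is a minimal illustration of top-k routing in general, not the model's actual implementation: the function name `route_top_k`, the random router scores, and the choice to apply a softmax over only the selected experts are all assumptions made for the example.

```python
import math
import random

NUM_EXPERTS = 128  # total experts, as stated in the text
TOP_K = 4          # experts activated per token, as stated in the text


def route_top_k(router_logits, k=TOP_K):
    """Return (expert_indices, weights) for the k highest-scoring experts.

    Hypothetical helper: weights are a softmax over the selected logits only,
    which is an assumption of this sketch.
    """
    # Indices of the k largest router scores, best first.
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i],
                 reverse=True)[:k]
    # Numerically stable softmax restricted to the chosen experts.
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return top, [e / total for e in exps]


# Stand-in router scores for one token (random, for illustration only).
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
experts, weights = route_top_k(logits)
print("selected experts:", experts)
print("mixing weights:  ", [round(w, 3) for w in weights])
```

Only the four selected experts would run for this token; the other 124 are skipped, which is where the compute savings come from.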

Fully Permissive Apache 2.0 License

Open licensing is at the core of this model. The Apache 2.0 license removes the usual barriers, allowing you to use GPT-OSS-120B for any purpose, commercial or personal, without licensing fees or usage caps. You can modify the model, integrate it into your proprietary software, and redistribute your versions, provided you keep the license and attribution notices. This lets businesses and developers innovate without legal uncertainty.

Advanced Chain-of-Thought Reasoning

GPT-OSS-120B is built for complex problem-solving. It has native Chain-of-Thought (CoT) capabilities, meaning it can break down difficult questions into logical, step-by-step reasoning. You can also configure the reasoning effort (low, medium, or high) to match your task, from quick answers to deep, analytical processes. This helps it perform well on benchmarks for mathematics, coding, and scientific reasoning.
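As a rough sketch of how the reasoning effort might be selected in practice: the example below assumes the level is passed as a line such as `Reasoning: high` in the system message of a chat-style request. The helper name `build_messages` is hypothetical, and the exact message format depends on the serving stack you use, so check its documentation before relying on this.

```python
VALID_EFFORTS = ("low", "medium", "high")  # the three levels named in the text


def build_messages(question, effort="medium"):
    """Build a chat-style message list with a reasoning-effort hint.

    Hypothetical helper: assumes the effort is set through a
    "Reasoning: <level>" line in the system message.
    """
    if effort not in VALID_EFFORTS:
        raise ValueError(f"effort must be one of {VALID_EFFORTS}, got {effort!r}")
    return [
        {"role": "system", "content": f"Reasoning: {effort}"},
        {"role": "user", "content": question},
    ]


messages = build_messages("Is 2027 a prime number? Explain briefly.", effort="high")
for message in messages:
    print(message)
```

Lower effort generally trades depth for speed, so a quick lookup might use `low` while a multi-step proof might use `high`.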

Efficient MXFP4 Quantization & Tool Use

Ready for real-world deployment, the model uses MXFP4 quantization applied to its MoE weights, sharply reducing memory requirements while maintaining strong performance. It also comes with native tool integration, allowing it to perform web searches, execute Python code, and call custom functions. This turns the model from a passive text generator into an active agent capable of interacting with external systems.
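To make the custom-function idea concrete, here is a minimal sketch of the application side of tool use: the model emits a tool call (a function name plus JSON-encoded arguments), and your code looks up and runs the matching function. The `add` tool, the `TOOLS` registry, and the shape of the `tool_call` dictionary are all hypothetical; real serving stacks define their own formats.

```python
import json


def add(a, b):
    """Hypothetical example tool: add two numbers."""
    return a + b


# Registry mapping tool names to Python callables (an assumption of this sketch).
TOOLS = {"add": add}


def dispatch(tool_call):
    """Run the function named in a tool call and return its result.

    `tool_call` is assumed to look like
    {"name": "add", "arguments": '{"a": 2, "b": 3}'}.
    """
    name = tool_call["name"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    kwargs = json.loads(tool_call["arguments"])  # arguments arrive as a JSON string
    return TOOLS[name](**kwargs)


result = dispatch({"name": "add", "arguments": '{"a": 2, "b": 3}'})
print(result)
```

In a full agent loop, the result would be sent back to the model as a new message so it can continue reasoning with it.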

Use Cases of GPT-OSS-120B

AI Research and Development

For researchers and ML engineers, GPT-OSS-120B is a fantastic sandbox. You can study its advanced MoE architecture, experiment with fine-tuning techniques on a state-of-the-art model, and push the boundaries of what's possible in machine reasoning—all without licensing restrictions. It serves as both a powerful baseline and a flexible platform for innovation.

Building Commercial AI Applications

Developers can integrate this powerful reasoning engine directly into their products. Whether you're creating an advanced coding assistant, a sophisticated customer support chatbot, or a complex data analysis tool, the Apache 2.0 license gives you the green light to build and sell your application with the model at its core, all while keeping costs predictable.

Complex Analysis and Problem-Solving

Businesses and analysts can leverage the model's strong reasoning for deep-dive tasks. Use it to parse lengthy financial reports, generate insights from technical documentation, solve intricate operational problems, or tackle competition-level math questions. Its 128k-token context window is well suited to these long, complex documents.

Local and Private Deployment

For projects where data privacy and security are paramount, GPT-OSS-120B can be deployed on your own infrastructure. Run it on a local server or a workstation with an 80GB GPU to ensure sensitive information never leaves your control, while still benefiting from strong AI capabilities.

Frequently Asked Questions

What hardware do I need to run GPT-OSS-120B?

Thanks to its efficient MoE architecture and MXFP4 quantization, you can run the quantized version of GPT-OSS-120B on a single GPU with 80GB of VRAM, such as an NVIDIA H100 or the 80GB variant of the A100. This makes it surprisingly accessible for a model of its size, allowing for local or private server deployment.
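A back-of-the-envelope estimate shows why the quantized weights fit. The sketch below assumes MXFP4 stores each value in 4 bits plus one shared 8-bit scale per block of 32 values, or 4.25 bits per parameter. It also makes the simplifying assumption that every parameter is stored in MXFP4, and it ignores the KV cache and activations, so treat the result as a rough guide rather than a precise requirement.

```python
TOTAL_PARAMS = 117e9  # total parameter count, as stated in the text

# Assumed MXFP4 layout: 4-bit elements plus one shared 8-bit scale per 32 elements.
MXFP4_BITS_PER_PARAM = 4 + 8 / 32  # 4.25 bits
BF16_BITS_PER_PARAM = 16           # unquantized 16-bit baseline for comparison


def weight_gigabytes(num_params, bits_per_param):
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


mxfp4_gb = weight_gigabytes(TOTAL_PARAMS, MXFP4_BITS_PER_PARAM)
bf16_gb = weight_gigabytes(TOTAL_PARAMS, BF16_BITS_PER_PARAM)
print(f"MXFP4 (simplified): {mxfp4_gb:.1f} GB")
print(f"BF16 baseline:      {bf16_gb:.1f} GB")
```

Under these assumptions the quantized weights come to roughly 62 GB, which leaves some headroom on an 80GB card, while a 16-bit copy at about 234 GB would not fit on one.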

How does the Mixture-of-Experts architecture make it efficient?

Imagine having a team of 128 specialists, but only calling a meeting with the 4 most relevant experts for each specific problem. That's how MoE works. While the entire "team" (the full 117B parameters) is available, the model only activates a small subset (5.1B parameters) per token. This selective activation drastically reduces the computational cost during inference.
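The numbers in this answer can be checked with simple arithmetic. The short sketch below uses only the figures quoted above; the variable names are illustrative.

```python
TOTAL_PARAMS = 117e9   # full parameter count, as stated in the text
ACTIVE_PARAMS = 5.1e9  # parameters used per token, as stated in the text
NUM_EXPERTS = 128
ACTIVE_EXPERTS = 4

active_param_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
active_expert_fraction = ACTIVE_EXPERTS / NUM_EXPERTS

print(f"parameters active per token: {active_param_fraction:.1%}")
print(f"experts active per token:    {active_expert_fraction:.1%}")
```

Roughly 4.4% of the parameters are active per token, slightly more than the 3.1% share of experts. The gap is expected, since components outside the experts (such as attention layers and embeddings) run for every token.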

Can I use GPT-OSS-120B for my commercial product?

Absolutely! This is one of its biggest advantages. The model is released under the Apache 2.0 license, which is fully permissive. You are free to use, modify, and distribute it—including within commercial products—without needing to pay licensing fees to OpenAI or share your proprietary code.

How does its performance compare to other models?

According to OpenAI's published evaluations, GPT-OSS-120B achieves near-parity with o4-mini on core reasoning tasks and performs especially well in areas like HealthBench and competition math. Among large open-weight models, it offers a strong balance of capability and operational efficiency.
