diffray vs OpenMark AI

Side-by-side comparison to help you choose the right AI tool.

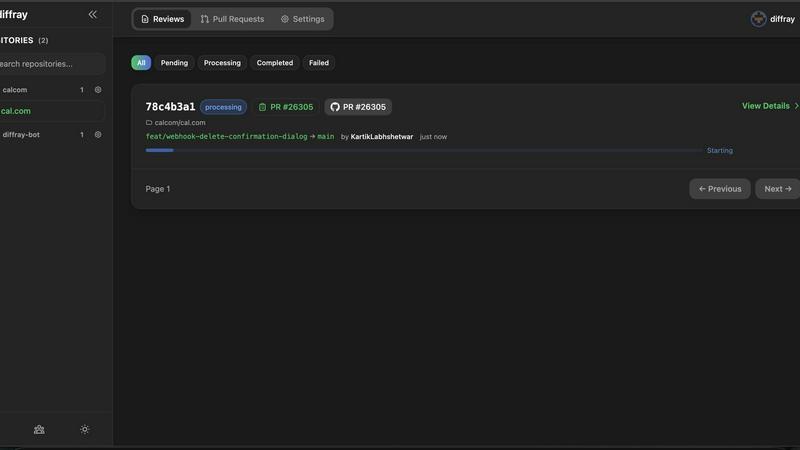

diffray

diffray uses multi-agent AI to review your code, catching real bugs with far fewer false alarms.

Last updated: February 28, 2026

OpenMark AI lets you benchmark more than 100 AI models on your own tasks, comparing cost, speed, quality, and stability in minutes.

Last updated: March 26, 2026

Feature Comparison

diffray

Multi-Agent Specialized Architecture

Unlike tools that use a single model for everything, diffray relies on a team of more than 30 specialized AI agents. Think of it as having a dedicated security expert, a performance guru, a bug-detection specialist, and a best-practices coach all reviewing your code at the same time. Each agent is tuned for its own domain, so its feedback stays relevant and well informed. This specialization is what keeps generic, unhelpful comments to a minimum and surfaces insights that are worth your time.
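To make the multi-agent idea concrete, here is a minimal Python sketch of the general pattern: several specialized reviewers inspect the same change, and their findings are merged into one report. It is a hypothetical illustration, not diffray's implementation or API. The agent functions, the `Finding` type, and the string-matching rules are all assumptions. In diffray each specialist is an AI model, not a one-line heuristic.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Finding:
    agent: str    # which specialist produced the finding
    line: int     # 1-based line number within the changed lines
    message: str


# Hypothetical agent signature: changed lines in, findings out.
Agent = Callable[[List[str]], List[Finding]]


def security_agent(lines: List[str]) -> List[Finding]:
    # Toy rule standing in for an AI model: SQL passed to execute() as an f-string.
    return [Finding("security", n, "SQL query built with an f-string")
            for n, text in enumerate(lines, start=1) if "execute(f" in text]


def bug_agent(lines: List[str]) -> List[Finding]:
    # Toy rule: comparing to None with == instead of `is`.
    return [Finding("bug", n, "use 'is None' instead of '== None'")
            for n, text in enumerate(lines, start=1) if "== None" in text]


def best_practice_agent(lines: List[str]) -> List[Finding]:
    # Toy rule: a bare 'except:' swallows every exception.
    return [Finding("best-practice", n, "avoid a bare 'except:'")
            for n, text in enumerate(lines, start=1) if text.strip() == "except:"]


def review(lines: List[str], agents: List[Agent]) -> List[Finding]:
    # Run every specialist over the same change, merge the results,
    # drop exact duplicates, and sort by line for a readable report.
    merged = {finding for agent in agents for finding in agent(lines)}
    return sorted(merged, key=lambda f: (f.line, f.agent))


# Hypothetical changed lines from a pull request.
SAMPLE_DIFF = [
    'cur.execute(f"SELECT * FROM users WHERE id = {uid}")',
    "try:",
    "    value = load()",
    "except:",
    "    value = None",
    "if value == None:",
]

if __name__ == "__main__":
    for f in review(SAMPLE_DIFF, [security_agent, bug_agent, best_practice_agent]):
        print(f"line {f.line} [{f.agent}] {f.message}")
```

In this sketch each specialist stays small and focused. The merge step is the one place where results from different domains come together, so deduplication and ordering happen there.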

Context-Aware Code Analysis

diffray doesn't look at lines of code in isolation. It takes into account the context of your entire codebase as well as the specific changes in your pull request. This lets it tell a genuine issue apart from code that merely looks suspicious but follows your project's established patterns or architecture. By analyzing relationships and patterns across the code, it delivers accurate, actionable feedback that tells you not just what might be wrong, but why it matters in your situation.

Drastic Reduction in False Positives

diffray reports that its approach cuts false positives by 87%, one of its most frequently cited benefits. This matters for developer experience: instead of sifting through dozens of irrelevant warnings, your team can focus on the signals that count. Fewer false alarms build trust in the tool. When diffray flags an issue, developers know it likely deserves attention, which leads to faster and more confident reviews.

Comprehensive Issue Detection

While reducing noise, diffray also strengthens the signal: according to diffray, it identifies three times as many real issues as conventional approaches. Its agents scan for a wide spectrum of concerns, from critical security flaws and memory leaks to subtle bugs, anti-patterns, and performance inefficiencies. This broad coverage acts as a safety net, catching problems that might slip through manual review or be missed by less sophisticated tools.

OpenMark AI

Intuitive Task Description

OpenMark AI lets users describe their benchmarking tasks in plain language. No technical jargon is required, so the platform is accessible to teams of all skill levels, and you can set up the tests you need without extensive prior knowledge.

Real-Time Model Comparison

The platform runs real-time comparisons across more than 100 models, so you can benchmark several tasks at once. The results show immediately which model performs best on your specific requirements, helping you choose the most suitable option.

Cost Efficiency Tracking

OpenMark AI emphasizes the real costs of API calls. It reports detailed cost-per-request figures, helping users identify the model that best balances quality and affordability. This is particularly useful for teams that need to stay within budget while still achieving high-quality outputs.
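For token-priced APIs, cost per request usually comes down to simple arithmetic. The sketch below assumes the common pricing model of separate per-million-token rates for input and output. It shows one possible way to trade quality against cost: pick the cheapest model that clears a quality bar. The model names, prices, and scores are placeholders, and this is not how OpenMark AI computes or ranks its results.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Pricing:
    # Hypothetical list prices in US dollars per one million tokens.
    input_per_million: float
    output_per_million: float


def cost_per_request(p: Pricing, input_tokens: int, output_tokens: int) -> float:
    # Cost of one call = input tokens at the input rate + output tokens at the output rate.
    return (input_tokens * p.input_per_million
            + output_tokens * p.output_per_million) / 1_000_000


def cheapest_meeting_bar(results: Dict[str, Tuple[float, float]],
                         min_quality: float) -> Optional[str]:
    # results maps model name -> (quality score in [0, 1], cost per request in USD).
    # Return the cheapest model whose quality clears the bar, or None if none do.
    eligible = [(cost, name) for name, (quality, cost) in results.items()
                if quality >= min_quality]
    return min(eligible)[1] if eligible else None


if __name__ == "__main__":
    # Placeholder models, prices, and scores for illustration only.
    a = cost_per_request(Pricing(3.0, 15.0), input_tokens=1200, output_tokens=400)
    b = cost_per_request(Pricing(0.5, 1.5), input_tokens=1200, output_tokens=400)
    results = {"model-a": (0.92, a), "model-b": (0.81, b)}
    print(f"model-a: ${a:.4f}, model-b: ${b:.4f}")
    print(cheapest_meeting_bar(results, min_quality=0.85))  # model-a
    print(cheapest_meeting_bar(results, min_quality=0.80))  # model-b
```

Lowering the quality bar from 0.85 to 0.80 flips the choice to the model that costs about one eighth as much per request. Making that trade-off visible is the point of tracking cost alongside quality.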

Consistency Checks

The platform includes tools for checking how consistent a model's outputs are across repeated runs, which is crucial for applications where reliability matters. By running the same task under the same conditions multiple times, users can confirm that the selected model meets their stability requirements.
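Stability across repeated runs can be summarized with basic statistics. This minimal sketch assumes each run yields a numeric quality score. It reports the mean and population standard deviation per model and picks the steadiest model that still meets a quality bar. It uses Python's standard `statistics` module. The model names, scores, and selection rule are illustrative assumptions, not OpenMark AI's scoring method.

```python
from statistics import mean, pstdev
from typing import Dict, List, Optional, Tuple


def stability_report(scores: Dict[str, List[float]]) -> Dict[str, Tuple[float, float]]:
    # For each model, summarize repeated-run quality scores as
    # (mean score, population standard deviation). Lower spread = more stable.
    return {model: (mean(runs), pstdev(runs)) for model, runs in scores.items()}


def most_stable(scores: Dict[str, List[float]], min_mean: float) -> Optional[str]:
    # Among models whose average score meets min_mean, pick the one with the
    # smallest run-to-run spread; return None if no model qualifies.
    report = stability_report(scores)
    eligible = [(spread, model) for model, (avg, spread) in report.items()
                if avg >= min_mean]
    return min(eligible)[1] if eligible else None


if __name__ == "__main__":
    # Placeholder scores from four repeated runs of the same task.
    runs = {
        "model-a": [0.9, 0.9, 0.9, 0.9],
        "model-b": [1.0, 0.8, 1.0, 0.8],
    }
    for model, (avg, spread) in stability_report(runs).items():
        print(f"{model}: mean={avg:.2f} stdev={spread:.2f}")
    print(most_stable(runs, min_mean=0.85))  # model-a
```

Both placeholder models average 0.90, so a single run could make either look better. Only the spread across runs shows that model-b swings between 0.8 and 1.0.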

Use Cases

diffray

Accelerating Pull Request Workflows

Development teams can integrate diffray directly into their GitHub or GitLab workflows as a first-line reviewer. As soon as a pull request is opened, diffray's agents get to work and post detailed, categorized feedback within minutes. Human reviewers then start with a clear list of potential issues already identified. diffray states that this cuts the average review time from 45 minutes to 12 minutes, significantly speeding up the merge process.

Onboarding New Team Members

For new developers joining a project, understanding the codebase's standards and catching subtle mistakes can be challenging. diffray serves as an always-available mentor, reviewing their code against the project's best practices and security norms. This provides immediate, constructive feedback, helps enforce consistency, and accelerates the onboarding process by teaching best practices through direct, contextual examples.

Enhancing Code Quality and Security

Teams aiming to proactively improve their code health and security posture use diffray as a continuous guardrail. With its specialized security and best-practice agents, it automatically scans every change for vulnerabilities like SQL injection, insecure dependencies, or sensitive data exposure. This shift-left approach embeds quality and security checks directly into the developer's workflow, preventing issues from reaching production.

Supporting Solo Developers and Freelancers

Even developers working alone benefit immensely from a second pair of "eyes." diffray acts as a reliable coding partner for freelancers and indie developers, offering expert-level reviews that would otherwise require a colleague. It helps catch bugs, optimize performance, and ensure clean code before delivery, increasing the quality and reliability of work delivered to clients without the need for a full team.

OpenMark AI

Model Selection for Development

Developers can use OpenMark AI to make informed choices about which AI model to integrate into their applications. By comparing how multiple models perform on specific tasks, teams can select the one that best aligns with their project goals and user needs.

Cost-Benefit Analysis

Product teams can conduct thorough cost-benefit analyses to determine which model offers the best value for their investment. By examining real costs alongside performance metrics, teams can make strategic decisions that enhance their budget management and overall ROI.

Quality Assurance in AI Features

Quality assurance teams can leverage OpenMark AI to validate the outputs of AI features before they go live. By running tests and analyzing consistency, they can ensure that the model delivers expected results, reducing the risk of errors in production.

Academic Research and Experimentation

Researchers can use OpenMark AI to benchmark various models for academic purposes. By testing different LLMs on a range of tasks, researchers can contribute valuable insights into model performance and characteristics, aiding the broader AI community in understanding model capabilities.

Overview

About diffray

diffray is an AI-powered code review assistant designed to change how developers and engineering teams handle pull requests. At its core, diffray tackles the biggest pain point of traditional AI review tools: overwhelming noise and irrelevant feedback. Instead of relying on a single, generic AI model that often cries wolf, diffray uses a multi-agent architecture of more than 30 specialized AI agents. Each agent focuses on a critical area such as security vulnerabilities, performance bottlenecks, bug patterns, coding best practices, and even SEO for web projects. This targeted approach allows diffray to understand the specific context of your code changes and deliver precise, actionable insights. According to diffray, the result is an 87% reduction in false positives while uncovering three times as many genuine, critical issues. For teams, this means cutting the average time spent on pull request reviews from 45 minutes to 12 minutes. diffray is built for development teams of all sizes who want to improve code quality, boost productivity, and make the review process more collaborative and efficient, without the clutter and frustration of inaccurate alerts.

About OpenMark AI

OpenMark AI is a web application built specifically for task-level benchmarking of large language models (LLMs). Users describe their testing requirements in plain language, run the same prompts against a variety of models in a single session, and compare key metrics such as cost per request, latency, scored quality, and stability across multiple runs. Because each task is run repeatedly, users can see the variance in outputs instead of relying on a single, potentially unrepresentative result. OpenMark AI is designed for developers and product teams and helps them decide which model to validate before launching AI-driven features. Benchmarking is hosted and paid for with credits, so there is no need to configure separate API keys for different models, which keeps the testing process streamlined. With its focus on cost efficiency and consistent output quality, OpenMark AI is aimed at teams that care about both performance and budget in their AI implementations.

Frequently Asked Questions

diffray FAQ

How does diffray achieve such a low false-positive rate?

diffray's multi-agent architecture is specifically designed for accuracy. Instead of one model trying to be an expert at everything, we have over 30 specialized agents. Each agent is an expert in a narrow field, like security or performance, and is trained to understand context. This deep specialization allows the system to make far more nuanced judgments, distinguishing between actual problems and acceptable code patterns, which leads to the 87% reduction in false alarms.

What programming languages and frameworks does diffray support?

diffray is built to support a wide range of modern programming languages and popular frameworks. While the exact list is continually expanding, it includes strong support for JavaScript/TypeScript, Python, Java, Go, Ruby, PHP, and their associated ecosystems and frameworks like React, Node.js, Django, and Spring. The best way to check for your specific stack is to connect your repository for a trial.

How does diffray integrate with our existing development tools?

diffray is designed for seamless integration into your existing workflow. It primarily connects directly with GitHub and GitLab, acting as a bot or app that automatically comments on your pull requests. There's no need to switch contexts or use a separate dashboard; the feedback appears right where your team already works, making adoption smooth and non-disruptive.

Is my source code kept private and secure with diffray?

Absolutely. Code security and privacy are our top priorities. diffray treats your code with the highest level of confidentiality. We use secure, encrypted connections for all data in transit, and we do not store your source code permanently after analysis. You retain full ownership of your code, and our systems are designed to analyze it only for the purpose of providing the review feedback you request.

OpenMark AI FAQ

What kind of models can I test with OpenMark AI?

OpenMark AI supports a large catalog of models, including those from OpenAI, Anthropic, Google, and more. This extensive selection allows users to benchmark a wide variety of LLMs to find the best fit for their specific tasks.

Do I need to set up API keys to use OpenMark AI?

No, OpenMark AI simplifies the benchmarking process by using hosted benchmarking that operates on credits. This means you do not need to configure separate API keys for different models, allowing for a smoother testing experience.

How does OpenMark AI ensure the accuracy of its results?

OpenMark AI performs real API calls to the models, providing side-by-side results based on actual outputs rather than cached or marketing numbers. This ensures that users receive accurate and relevant benchmarking data for their comparisons.

Are there any free trials or plans available for OpenMark AI?

Yes, OpenMark AI offers both free and paid plans, allowing users to explore the features and capabilities of the platform. Users can sign up to receive 50 free credits to start their benchmarking journey without any initial investment.

Alternatives

diffray Alternatives

diffray is an AI-powered code review tool that helps development teams catch bugs and improve code quality. It stands out among developer productivity tools by using a specialized multi-agent system to provide precise, actionable feedback. Developers explore alternatives for various reasons: budget constraints, specific feature needs such as integration with a particular tech stack, or a preference for a different user experience. Weighing options is a normal part of finding the right fit for a team's workflow and goals. When evaluating other tools, focus on what matters most for your team. Key considerations include:

- how accurate the feedback is, so you don't waste time on false positives
- how well the tool understands your existing codebase
- its overall impact on your team's review speed and collaboration

OpenMark AI Alternatives

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). It lets users compare more than 100 models on cost, speed, quality, and stability. That makes it useful for developers and product teams who need to validate or choose a model before building AI features into their products. Users describe tasks in plain language and do not need to manage multiple API keys, which keeps the evaluation process simple. Users look for alternatives to OpenMark AI for various reasons, including pricing, specific feature sets, or unique platform requirements. When comparing options, consider the range of supported models, how easy the interface is to use, and whether the tool reports its performance metrics transparently. Weighing these factors will help you find a benchmarking tool that fits your team's needs and project goals.