HookMesh vs OpenMark AI

Side-by-side comparison to help you choose the right AI tool.

HookMesh adds reliable webhook delivery and a customer portal to your SaaS in minutes.

Last updated: February 28, 2026

OpenMark AI benchmarks 100+ LLMs on your task: cost, speed, quality & stability. Browser-based; no provider API keys for hosted runs.

Visual Comparison

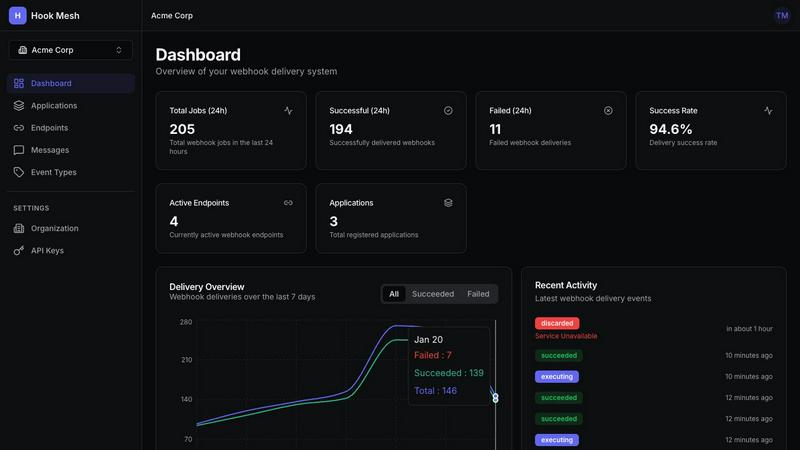

HookMesh

OpenMark AI

Overview

About HookMesh

HookMesh is a developer-first platform for building and managing webhooks in SaaS products. Building webhooks in-house carries hidden complexity: writing robust retry logic, implementing circuit breakers, and spending hours debugging why a webhook didn't arrive. HookMesh handles this with managed infrastructure for reliable webhook delivery, so your team can focus on the core product.

The platform is designed for developers and product teams who need to offer real-time integrations to their customers. Its main value is the combination of "set it and forget it" reliability for your team and a self-service portal for your customers. Your engineering team saves months of development time, while your end users get full visibility into webhook deliveries, manage their own endpoints, and replay failed deliveries with a single click. HookMesh positions this as adding enterprise-grade webhook functionality in minutes, not months.
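To make the retry logic and circuit breakers mentioned above concrete, here is a minimal Python sketch of what an in-house version might involve. It is not HookMesh's implementation or API: the names `backoff_delays`, `CircuitBreaker`, and `deliver`, and all default values, are hypothetical and chosen only for illustration.

```python
import time
from typing import Callable


def backoff_delays(base: float = 1.0, factor: float = 2.0,
                   max_delay: float = 60.0, attempts: int = 5) -> list[float]:
    """Seconds to wait before each retry: base, base*factor, ... capped at max_delay."""
    return [min(base * factor ** i, max_delay) for i in range(attempts)]


class CircuitBreaker:
    """Stops calling an endpoint after `threshold` consecutive failures (illustrative only)."""

    def __init__(self, threshold: int = 3) -> None:
        self.threshold = threshold
        self.failures = 0

    @property
    def is_open(self) -> bool:
        return self.failures >= self.threshold

    def record(self, success: bool) -> None:
        # A success resets the count; a failure increments it.
        self.failures = 0 if success else self.failures + 1


def deliver(send: Callable[[], bool], breaker: CircuitBreaker,
            delays: list[float],
            sleep: Callable[[float], None] = time.sleep) -> bool:
    """Attempt delivery once, then retry after each delay.

    `send` is a caller-supplied function that returns True on success.
    Gives up early if the breaker opens.
    """
    for delay in [0.0] + delays:
        if breaker.is_open:
            return False
        if delay:
            sleep(delay)
        ok = send()
        breaker.record(ok)
        if ok:
            return True
    return False
```

A caller would pass its own `send` function (for example, one that POSTs the payload and returns whether the response was a 2xx) together with `backoff_delays()`. A production system would also need persistence, jitter, per-endpoint state, and delivery logs, which is the kind of work the paragraph above describes as hidden complexity.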

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs, so you see variance, not a single lucky output.
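As a rough illustration of why repeat runs matter, the sketch below summarizes a list of quality scores from repeated runs of the same task. It does not reflect how OpenMark AI computes stability; the function name `summarize_runs` and the choice of population standard deviation are assumptions made for this example.

```python
from statistics import mean, pstdev


def summarize_runs(scores: list[float]) -> dict[str, float]:
    """Summarize quality scores from repeated runs of one task on one model.

    A high mean with a large stdev or spread suggests the model sometimes
    produces a good output but is not consistent.
    """
    if not scores:
        raise ValueError("need at least one score")
    return {
        "mean": mean(scores),
        "stdev": pstdev(scores),              # population standard deviation
        "spread": max(scores) - min(scores),  # best run minus worst run
    }
```

Two models with the same mean score can differ sharply in `stdev` and `spread`, which a single run would not reveal.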

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

You get side-by-side results with real API calls to models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.
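One simple way to express "quality relative to what you pay" is a quality-per-dollar ratio. The sketch below assumes that definition; it is not OpenMark AI's scoring formula, and the `Result` type, field names, and `rank_by_efficiency` helper are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Result:
    model: str       # placeholder model label
    quality: float   # scored quality for the task, e.g. on a 0..1 scale
    cost_usd: float  # cost per request in US dollars


def quality_per_dollar(r: Result) -> float:
    """Assumed efficiency metric: quality divided by cost per request."""
    if r.cost_usd <= 0:
        raise ValueError("cost_usd must be positive")
    return r.quality / r.cost_usd


def rank_by_efficiency(results: list[Result]) -> list[Result]:
    """Return results ordered from most to least cost-efficient."""
    return sorted(results, key=quality_per_dollar, reverse=True)
```

Under this metric, the cheapest model does not automatically rank first: a model that costs twice as much but scores well above twice the quality comes out ahead.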

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.