OpenMark AI vs qtrl.ai

Side-by-side comparison to help you choose the right AI tool.

OpenMark AI lets you benchmark more than 100 AI models on your own tasks, comparing cost, speed, quality, and stability in minutes.

Last updated: March 26, 2026

qtrl.ai

qtrl.ai helps QA teams scale testing with AI agents while keeping full control and governance.

Last updated: February 27, 2026

Feature Comparison

OpenMark AI

Intuitive Task Description

OpenMark AI lets you describe benchmarking tasks in plain language rather than technical jargon, which makes it accessible to teams of all skill levels. You can set up the tests you need without extensive prior knowledge.

Real-Time Model Comparison

The platform lets you run the same task against more than 100 models in a single session and compare the results side by side. This shows you quickly which model performs best for your specific requirements, so you can choose the one that fits your needs.

Cost Efficiency Tracking

OpenMark AI focuses on the real cost of API calls. It reports the cost per request for each model, helping you find the one that best balances quality and affordability. This is particularly useful for teams trying to control spending while still getting high-quality outputs.
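As a rough illustration of what "cost per request" means, the sketch below derives it from token usage, assuming the common pricing scheme in which input and output tokens are billed at separate per-million-token rates. The names (`ModelPricing`, `cost_per_request`) and the prices in the example are hypothetical; this is not OpenMark AI's API or its actual cost model, and OpenMark AI itself bills benchmarks in credits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPricing:
    """Hypothetical per-model prices in USD per one million tokens (assumed scheme)."""
    input_per_million: float
    output_per_million: float


def cost_per_request(pricing: ModelPricing, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one API call, given its input and output token counts."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    # Each token class is billed at its own rate; rates are quoted per million tokens.
    return (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    ) / 1_000_000


# Example with made-up prices: 1,200 prompt tokens and 400 completion tokens.
pricing = ModelPricing(input_per_million=3.0, output_per_million=15.0)
print(cost_per_request(pricing, input_tokens=1_200, output_tokens=400))  # 0.0096
```

Because output tokens are often priced higher than input tokens, a model with a lower headline price can still cost more per request if it tends to produce longer answers.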

Consistency Checks

The platform checks how consistent each model's outputs are across repeated runs, which matters for applications where reliability is key. By running the same task under the same conditions several times, you can see whether a model meets your stability requirements instead of relying on a single result.
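To make "consistency across repeated runs" concrete, here is a minimal sketch of two simple measures one could compute: the mean and spread (population standard deviation) of quality scores over several runs, and the share of runs whose output matches the most common output. These metrics and the function names are illustrative assumptions, not the scoring OpenMark AI actually uses.

```python
from collections import Counter
from statistics import mean, pstdev


def score_stability(scores: list[float]) -> tuple[float, float]:
    """Return (mean, population standard deviation) of quality scores from repeated runs.

    A lower standard deviation means the model scores more consistently.
    """
    if not scores:
        raise ValueError("need at least one run")
    return mean(scores), pstdev(scores)


def agreement_rate(outputs: list[str]) -> float:
    """Return the fraction of runs whose output matches the most common output.

    Outputs are compared after normalizing whitespace, so trivial
    formatting differences do not count as disagreement.
    """
    if not outputs:
        raise ValueError("need at least one run")
    normalized = [" ".join(text.split()) for text in outputs]
    _, top_count = Counter(normalized).most_common(1)[0]
    return top_count / len(normalized)


# Hypothetical results from running the same prompt five times on one model.
runs = ["Paris", "Paris", "Paris ", "Paris", "Lyon"]
scores = [0.9, 0.9, 0.9, 0.9, 0.2]
print(agreement_rate(runs))  # 0.8
print(score_stability(scores))
```

Exact-match agreement suits short, deterministic answers; for free-form text, comparing scored quality across runs is usually more informative.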

qtrl.ai

Enterprise-Grade Test Management

qtrl provides a robust, centralized system for all your testing artifacts. You can create and organize test cases, build detailed test plans, and execute structured test runs. Everything is linked for full traceability, allowing you to see exactly which requirements are covered by which tests. This creates clear audit trails and is built to support compliance needs, giving managers and stakeholders complete confidence in the testing process.

Autonomous QA Agents

This is the intelligent engine of qtrl. These AI-powered agents can execute high-level instructions on demand. You can describe a test scenario in natural language, like "test the checkout flow as a guest user," and the agent will run it in a real browser. They operate within your defined rules and can run continuously across different environments, providing scalable automation that adapts to your application's changes without constant manual script updates.

Progressive Automation Model

qtrl doesn't force you to jump into full AI automation. You start where you are comfortable, writing clear test instructions for the agents to follow. As trust builds, you can let qtrl suggest and generate new tests automatically based on coverage gaps or requirement changes. Every step is reviewable and approvable, ensuring your team always stays in the driver's seat while gradually increasing efficiency.

Governance by Design

Trust and control are foundational to qtrl. The platform offers permissioned autonomy levels, so you decide how much freedom the AI agents have. There are no black-box decisions: you get full visibility into what the agents are doing. Together with enterprise-ready security and encrypted secrets management, in which secrets are never exposed to the AI, this gives serious engineering teams the governance framework they need to adopt AI with confidence.

Use Cases

OpenMark AI

Model Selection for Development

Developers can utilize OpenMark AI to make informed choices about which AI model to integrate into their applications. By comparing the performance of multiple models on specific tasks, teams can select the one that aligns best with their project goals and user needs.

Cost-Benefit Analysis

Product teams can weigh each model's real cost against its performance metrics to determine which one offers the best value for their investment. This helps them make strategic decisions that keep budgets under control and improve overall ROI.

Quality Assurance in AI Features

Quality assurance teams can use OpenMark AI to validate the outputs of AI features before they go live. By running tests and analyzing consistency, they can confirm that the model delivers the expected results, reducing the risk of errors in production.

Academic Research and Experimentation

Researchers can use OpenMark AI to benchmark various models for academic purposes. By testing different LLMs on a range of tasks, researchers can contribute valuable insights into model performance and characteristics, aiding the broader AI community in understanding model capabilities.

qtrl.ai

Scaling Beyond Manual Testing

For QA teams overwhelmed by repetitive manual test cycles, qtrl offers a clear path forward. They can begin by documenting their existing manual tests as structured instructions in qtrl's management module. From there, they can progressively automate the most tedious flows using the AI agents, freeing human testers for more complex exploratory work while increasing test coverage and execution speed.

Modernizing Legacy QA Workflows

Companies stuck with outdated, script-heavy automation frameworks can use qtrl to transition smoothly. Instead of maintaining brittle scripts, teams can leverage qtrl's adaptive memory and AI agents to generate more resilient tests. The platform integrates with existing CI/CD pipelines and tools, allowing for a gradual modernization without a disruptive, all-at-once overhaul of the current process.

Ensuring Governance in Enterprise QA

Large organizations with strict compliance and audit requirements need control alongside automation. qtrl's full traceability from requirement to test execution, combined with its permissioned autonomy and detailed audit logs, makes it a strong fit. Engineering leads can scale QA efforts with AI while giving auditors clear evidence of what was tested, when, and with what outcome.

Empowering Product-Led Engineering Teams

Development teams that practice continuous deployment need fast, reliable feedback on quality. qtrl integrates into their workflow, allowing developers to write high-level test instructions for features they build. The autonomous agents can then execute these tests across environments as part of the CI/CD process, providing continuous quality feedback without requiring developers to become experts in test automation frameworks.

Overview

About OpenMark AI

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). You describe your testing requirements in plain language, run the same prompts against many models in a single session, and compare key metrics such as cost per request, latency, scored quality, and stability across multiple runs. Because stability is measured over repeated runs, you can see the variance in outputs instead of relying on a single, possibly unrepresentative result. The tool is aimed at developers and product teams deciding which model to validate before launching AI-driven features. Benchmarks are hosted and paid for with credits, so there is no need to configure separate API keys for each model. With its focus on cost efficiency and consistent output quality, OpenMark AI suits teams that care about both performance and budget in their AI implementations.

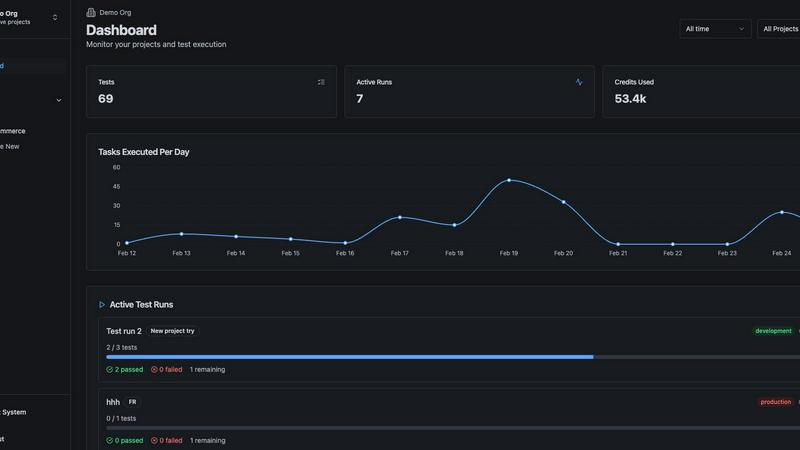

About qtrl.ai

qtrl.ai is a modern QA platform that helps software teams scale their quality assurance efforts without sacrificing control or governance. It combines enterprise-grade test management with trustworthy AI automation. At its core, qtrl provides a centralized hub where teams can organize test cases, plan test runs, trace requirements to coverage, and track quality metrics through real-time dashboards. This structured foundation gives engineering leads and QA managers clear visibility into what has been tested, what is passing, and where potential risks lie.

What sets qtrl apart is its progressive AI layer. Instead of forcing a risky, "black-box" AI-first approach, qtrl introduces intelligent automation gradually. Teams can start with simple manual test management and, when ready, use the built-in autonomous agents. These agents can generate UI tests from plain English descriptions, maintain them as the application evolves, and execute them at scale across multiple browsers and environments. This makes qtrl a good fit for product-led engineering teams, QA groups moving beyond manual testing, companies modernizing legacy workflows, and enterprises that require strict compliance and audit trails. Ultimately, qtrl aims to bridge the gap between the slow pace of manual testing and the brittle complexity of traditional automation, offering a trusted path to faster, more intelligent quality assurance.

Frequently Asked Questions

OpenMark AI FAQ

What kind of models can I test with OpenMark AI?

OpenMark AI supports a large catalog of models, including those from OpenAI, Anthropic, Google, and more. This extensive selection allows users to benchmark a wide variety of LLMs to find the best fit for their specific tasks.

Do I need to set up API keys to use OpenMark AI?

No, OpenMark AI simplifies the benchmarking process by using hosted benchmarking that operates on credits. This means you do not need to configure separate API keys for different models, allowing for a smoother testing experience.

How does OpenMark AI ensure the accuracy of its results?

OpenMark AI makes real API calls to each model and shows the results side by side, based on actual outputs rather than cached results or marketing figures. This means the comparison reflects how the models actually behave on your task.

Are there any free trials or plans available for OpenMark AI?

Yes. OpenMark AI offers both free and paid plans, so users can explore the platform's features before committing. New users receive 50 free credits at sign-up and can start benchmarking without any upfront cost.

qtrl.ai FAQ

How does qtrl's AI handle changes in my application's UI?

qtrl's autonomous agents are designed with adaptive memory. They build a living knowledge base of your application by learning from every exploration and test execution. When the UI changes, this context helps the AI understand the new structure. It can often adjust test steps automatically, and when it can't, it will flag the test for human review, making maintenance far less brittle than traditional coded automation.

Is my test data and application access secure with an AI agent?

Absolutely. Security and governance are core to qtrl's design. The platform uses enterprise-grade security practices. Crucially, any sensitive data like passwords or API keys are stored as encrypted environment secrets. These secrets are never exposed to the AI agent during execution; the system injects them securely, ensuring your credentials and data remain protected at all times.

Can I use qtrl if I only want test management without AI?

Yes, definitely. qtrl is built on a progressive automation model. You can use it solely as a powerful, structured test management platform from day one. The AI features are there to augment your workflow when you're ready. You can introduce AI-assisted test generation and execution at your own pace, starting with simple instruction-based execution and increasing autonomy over time.

How does qtrl integrate with our existing development tools?

qtrl is built to fit into real-world engineering workflows. It offers integrations for requirements management tools and full support for CI/CD pipelines. This means you can trigger test runs automatically from a pull request or a build, and feed results back into your monitoring dashboards. It's designed to work alongside your current toolchain, not replace it entirely.

Alternatives

OpenMark AI Alternatives

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). It lets users compare more than 100 models on cost, speed, quality, and stability, which helps developers and product teams validate or choose a model before integrating AI features into their products. Because tasks are described in plain language and no separate API keys are needed, evaluation is straightforward.

Users look for alternatives to OpenMark AI for various reasons, including pricing structures, specific feature sets, or particular platform requirements. When comparing options, consider the range of supported models, how easy the interface is to use, and whether the tool reports its performance metrics transparently. Weighing these factors will help you find a benchmarking tool that fits your team's needs and project goals.

qtrl.ai Alternatives

qtrl.ai is an AI-powered QA platform in the test management and automation category. It helps teams organize tests, execute runs, and gain visibility into quality through structured data and real-time dashboards. Its standout feature is an AI layer that can generate and maintain UI tests from natural language. Users often explore alternatives for various reasons. These can include budget constraints, the need for different feature sets, or specific integration requirements with their existing development stack. Some teams might prioritize pure open-source tools or seek a solution focused solely on manual test case management without an automation component. When evaluating other options, consider your team's primary needs. Key factors include the platform's scalability, its support for both manual and automated testing workflows, the ease of integrating with your CI/CD pipeline, and the depth of reporting and analytics offered. The ideal tool should align with your current QA maturity while supporting your growth toward more advanced practices.