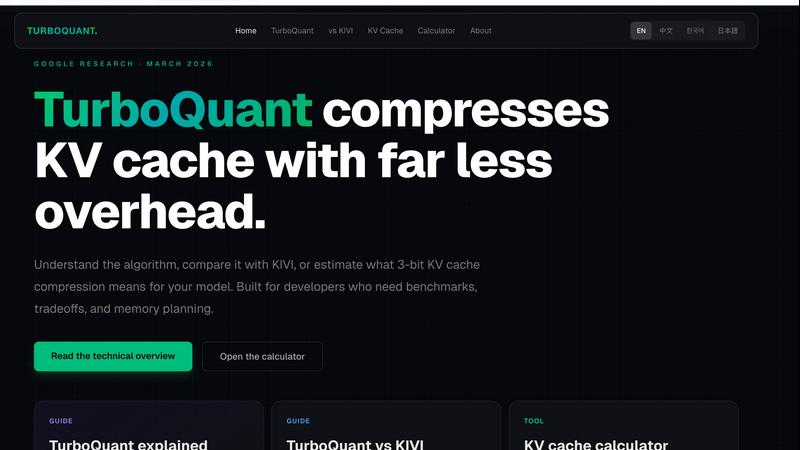

Google TurboQuant

Google TurboQuant compresses the KV cache for LLM inference, with near-lossless quality, a reported 6x smaller cache, and up to 8x faster attention on NVIDIA H100 GPUs.

About Google TurboQuant

Google TurboQuant is a KV cache compression method for large language model (LLM) inference. Developed by Google Research, it uses a two-stage approach to cut memory usage and improve computational efficiency. The first stage, PolarQuant, transforms input vectors into polar coordinates, which makes them easier to compress with scalar quantization; this stage captures most of the compression benefit with minimal overhead. The second stage applies a 1-bit Quantized Johnson-Lindenstrauss (QJL) transform to the residual error, which keeps inner-product estimates unbiased and so preserves attention quality. TurboQuant is reported to shrink the KV cache by 6x with no measured accuracy loss in benchmark tests. It is aimed at organizations and developers who run large models, particularly with long contexts, where the KV cache limits memory and inference speed.

Features of Google TurboQuant

Two-Stage Compression Pipeline

TurboQuant's pipeline has two stages: PolarQuant, which converts vectors to polar coordinates and applies scalar quantization, followed by a 1-bit QJL transform that corrects the residual error. Together they reduce the storage the KV cache needs.
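To make the first stage more concrete, here is a minimal sketch of polar-coordinate scalar quantization. It is an illustration only, not Google's implementation: the function names, the pairing of consecutive coordinates, the uniform angle grid, and keeping the radius in full precision are all assumptions made for this example.

```python
import math

def polar_quantize(vec, angle_bits=3):
    """Encode consecutive coordinate pairs as (radius, angle code).

    Hypothetical helper: the radius stays in full precision and only
    the angle is quantized, onto a uniform grid of 2**angle_bits levels.
    """
    if len(vec) % 2 != 0:
        raise ValueError("vector length must be even")
    levels = 1 << angle_bits
    step = 2 * math.pi / levels
    encoded = []
    for x, y in zip(vec[0::2], vec[1::2]):
        radius = math.hypot(x, y)
        angle = math.atan2(y, x) % (2 * math.pi)  # map to [0, 2*pi)
        code = int(round(angle / step)) % levels  # nearest grid angle
        encoded.append((radius, code))
    return encoded

def polar_dequantize(encoded, angle_bits=3):
    """Rebuild an approximate vector from (radius, angle code) pairs."""
    step = 2 * math.pi / (1 << angle_bits)
    vec = []
    for radius, code in encoded:
        vec.append(radius * math.cos(code * step))
        vec.append(radius * math.sin(code * step))
    return vec
```

Because the angle error is at most half a grid step, each reconstructed pair lies within `2 * radius * sin(step / 4)` of the original pair. Whatever error remains is the residual that a second stage would have to correct.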

Near-Lossless Compression

TurboQuant achieves near-lossless results at 3 bits per channel, which saves substantial memory without giving up the accuracy LLM applications need.
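Storing values at 3 bits each only saves memory if the codes are packed tightly, since 3 bits do not align to byte boundaries. The sketch below shows one way to do that packing in plain Python. The helper names and the little-endian layout are assumptions for illustration, not TurboQuant's actual storage format.

```python
def pack_codes(codes, bits=3):
    """Pack small unsigned integers into bytes, `bits` bits per code."""
    buffer = 0
    for i, code in enumerate(codes):
        if not 0 <= code < (1 << bits):
            raise ValueError(f"code {code} does not fit in {bits} bits")
        buffer |= code << (i * bits)  # place each code at its bit offset
    n_bytes = (len(codes) * bits + 7) // 8  # round up to whole bytes
    return buffer.to_bytes(n_bytes, "little")

def unpack_codes(packed, count, bits=3):
    """Recover `count` codes from bytes produced by pack_codes."""
    buffer = int.from_bytes(packed, "little")
    mask = (1 << bits) - 1
    return [(buffer >> (i * bits)) & mask for i in range(count)]

codes = [0, 1, 2, 3, 4, 5, 6, 7]
packed = pack_codes(codes)
print(len(packed))  # 3 bytes for eight 3-bit codes
```

For comparison, the same eight values stored as 16-bit floats would take 16 bytes.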

Enhanced Inference Speed

In 4-bit mode on NVIDIA H100 GPUs, TurboQuant speeds up attention computation by up to 8x. This matters most for applications that need low-latency, real-time responses.

Versatile Use Cases

TurboQuant works with the common attention variants: multi-head attention (MHA), grouped-query attention (GQA), and multi-query attention (MQA). This makes it suitable for a wide range of applications, from natural language processing to complex data retrieval tasks.
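The three attention variants differ mainly in how many key/value heads they cache, which is why one KV cache compression method can cover all of them. The sketch below shows the usual mapping from a query head to the key/value head it reads. The function name and the head counts are hypothetical, and the source does not describe how TurboQuant itself is wired into each variant.

```python
def kv_head_for(query_head, n_query_heads, n_kv_heads):
    """Return the index of the KV head that a query head attends with.

    MHA: n_kv_heads == n_query_heads (one KV head per query head)
    GQA: 1 < n_kv_heads < n_query_heads (query heads share in groups)
    MQA: n_kv_heads == 1 (all query heads share one KV head)
    """
    if n_query_heads % n_kv_heads != 0:
        raise ValueError("n_query_heads must be a multiple of n_kv_heads")
    group_size = n_query_heads // n_kv_heads
    return query_head // group_size

# Hypothetical model with 32 query heads.
print(kv_head_for(5, 32, 32))  # MHA -> 5
print(kv_head_for(5, 32, 8))   # GQA -> 1
print(kv_head_for(5, 32, 1))   # MQA -> 0
```

Fewer KV heads already means a smaller cache; compressing each cached head reduces it further.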

Use Cases of Google TurboQuant

Large-Scale LLM Inference

Organizations leveraging large language models can benefit from TurboQuant's memory-efficient KV cache, enabling them to handle extensive context lengths without overwhelming GPU memory resources.

AI-Powered Search Applications

TurboQuant's vector quantization can also compress the embeddings used in AI-driven search while preserving retrieval accuracy, so users can still find relevant information quickly in large datasets.

Resource-Constrained Environments

In scenarios where computational resources are limited, TurboQuant provides a solution for deploying LLMs efficiently, making it easier to implement advanced AI systems on smaller hardware setups.

Research and Development

Researchers can utilize TurboQuant to benchmark different LLM architectures efficiently. The tool helps them explore the trade-offs between memory usage and computational speed, facilitating informed decisions in model selection and optimization.

Frequently Asked Questions

What is KV cache compression, and why is it important?

KV cache compression reduces the memory footprint of the attention keys and values an LLM caches for every token it has processed. This matters because the cache grows with context length and can dominate GPU memory during inference. By compressing it, TurboQuant lets the same hardware serve longer contexts or more requests.
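A quick back-of-the-envelope calculation shows why the cache can dominate memory. The sketch below counts only the stored key and value payload. The model dimensions are hypothetical, the 16-bit baseline is an assumption (the page does not say which baseline its 6x figure uses), and any per-block metadata such as norms or scales is ignored.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len,
                   bits_per_value, batch_size=1):
    """Payload size of a KV cache in bytes (keys plus values)."""
    # Factor 2: one key vector and one value vector per token and head.
    values = 2 * n_layers * n_kv_heads * head_dim * seq_len * batch_size
    return (values * bits_per_value + 7) // 8  # round up to whole bytes

GIB = 2 ** 30

# Hypothetical model: 32 layers, 8 KV heads, head_dim 128, 128K-token context.
config = dict(n_layers=32, n_kv_heads=8, head_dim=128, seq_len=131072)

fp16 = kv_cache_bytes(bits_per_value=16, **config)
q3 = kv_cache_bytes(bits_per_value=3, **config)

print(fp16 / GIB)  # 16.0 GiB at 16 bits per value
print(q3 / GIB)    # 3.0 GiB at 3 bits per value
```

For this one hypothetical request, the uncompressed cache alone would take 16 GiB of GPU memory.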

How does TurboQuant maintain accuracy during compression?

TurboQuant compresses in two stages. PolarQuant does most of the size reduction, and a 1-bit QJL transform then corrects the residual error so that inner-product estimates stay unbiased. Since attention scores are inner products between queries and keys, this keeps attention quality high even after significant compression.
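The residual correction can be illustrated with a small sign-sketch estimator. This is a sketch under stated assumptions rather than TurboQuant's actual code: the function names, the Gaussian projection matrix, and the choice to store the residual's norm in full precision are assumptions for this example. It relies on the identity that, for a standard Gaussian vector g, the expected value of (g·q)·sign(g·r) is sqrt(2/pi)·(q·r)/‖r‖.

```python
import math
import random

def make_projection(m, d, seed=0):
    """m x d matrix of standard Gaussian entries, shared by keys and queries."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def qjl_encode(S, v):
    """Keep one sign bit per projection row, plus the vector's norm."""
    signs = [1 if dot(row, v) >= 0 else -1 for row in S]
    return signs, math.sqrt(dot(v, v))

def qjl_inner_product(S, query, signs, norm):
    """Estimate <query, v> from v's sign bits; unbiased over random S."""
    acc = sum(dot(row, query) * s for row, s in zip(S, signs))
    return math.sqrt(math.pi / 2) * norm * acc / len(S)

def corrected_score(S, query, key_hat, residual_code):
    """Score from the coarse key plus the 1-bit estimate for its residual."""
    signs, norm = residual_code
    return dot(query, key_hat) + qjl_inner_product(S, query, signs, norm)
```

In use, `key_hat` would be the first-stage reconstruction of a key and `residual_code` would be `qjl_encode(S, residual)` for `residual = key - key_hat`. The query is projected with the same matrix but is not quantized. The estimate is noisy for a small number of rows and tightens as more rows are used.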

Can TurboQuant be used with any LLM architecture?

TurboQuant supports the common attention variants, including MHA, GQA, and MQA, so it applies to most current LLM architectures and the applications built on them.

What hardware is required to achieve the best performance with TurboQuant?

TurboQuant performs best on NVIDIA H100 GPUs, where it achieves up to 8x faster attention computation in 4-bit mode. It can still help on other hardware, depending on the application and workload.
